Pipeline Basics

Introduction

To create a better understanding of Party Bus' pipeline flow, we have put together the following information for Application Teams and Platform One (P1) Teams.

Software Versioning

Party Bus uses the most recent version of software whenever available. However, this may impact how individual pipelines work, requiring product teams to make adjustments. Every effort will be made to give product teams advance notice of such changes; however, this is a dynamic environment. Product teams should make an effort to run as much of the pipeline as possible locally before any code is committed.

Modifications

Any modification of jobs and stages in the Party Bus pipeline must be approved by MDO/Cyber.

Jobs and pipeline stages will be updated or added when necessary to improve the overall security and quality of the images being produced.

Checks - Best Practices

Whenever possible, product teams should test changes locally before making commits. This helps to catch obvious errors, minimize excessive commits, reduce usage of shared resources, and test changes more quickly. This is particularly important for the build, lint, and unit test jobs, but can also be done with the dependency check, SonarQube, TruffleHog, build image, and end-to-end (E2E) test jobs (the GitLab SAST, Twistlock, and Anchore jobs cannot be replicated locally).

This guide explains how to recreate the Dependency Check job locally, but the same approach can be applied to the other jobs as well.

NOTE

Pipeline capabilities for checks should be considered a safety check, not a first check.

NOTE

CI/CD pipelines are a tool to automate the software delivery process. Developers are expected to do their due diligence to verify results.

Party Bus Pipelines

Deployment Pipelines

Deployment pipelines generate a single Docker image to be deployed as part of an application.

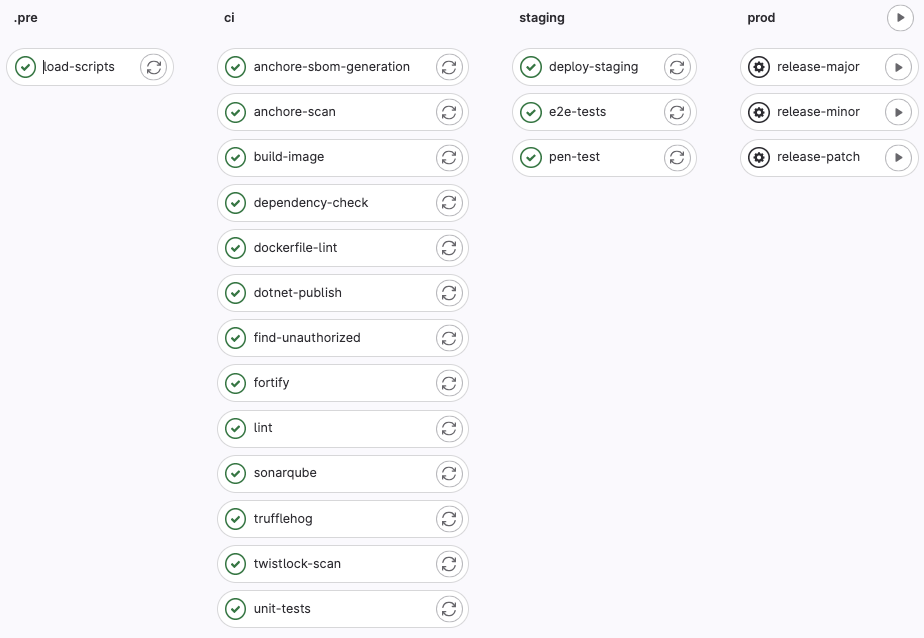

The pipeline stages and jobs:

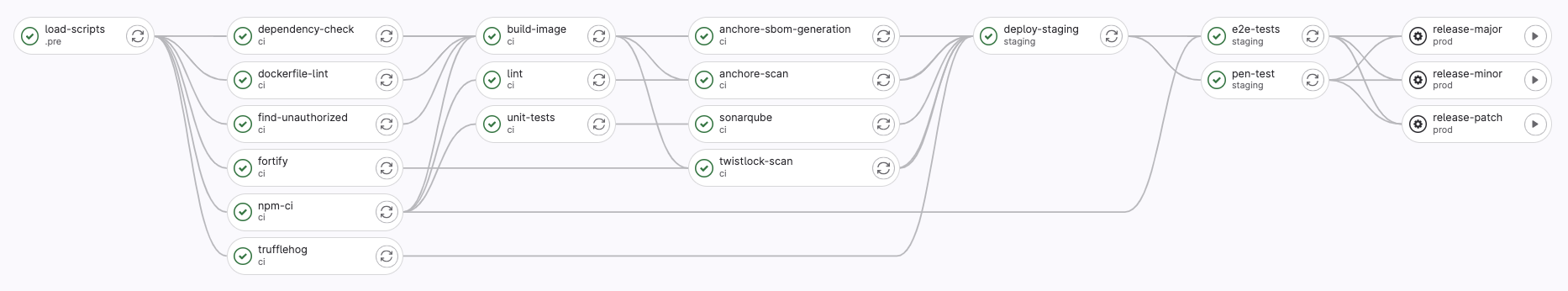

The job dependencies (NOTE: in the pipeline view, group jobs by job dependencies and show dependencies):

Package Pipelines

Package pipelines generate a package that will be uploaded to the project's Package Registry. It is typically used to build a common/core set of dependencies that will be used by other pipelines.

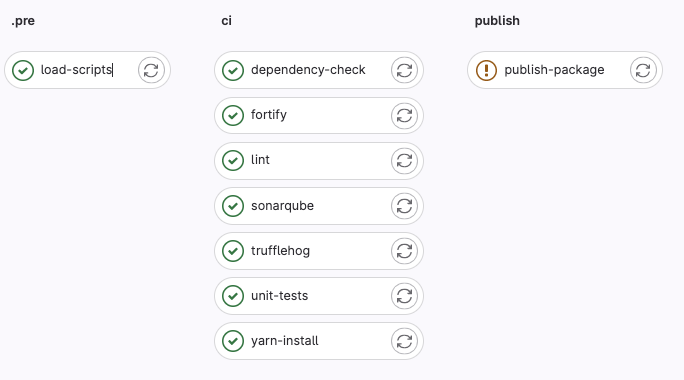

The pipeline stages and jobs:

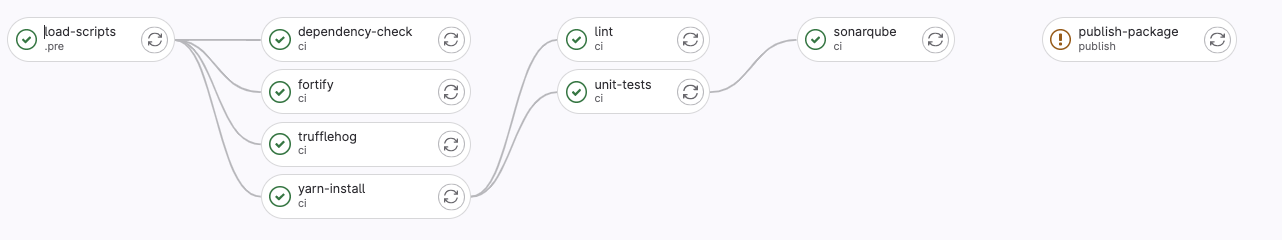

The job dependencies (NOTE: in the pipeline view, group jobs by job dependencies and show dependencies):

Pipeline Jobs

Pre

load-scripts

load-scripts

The load-scripts job loads required scripts into the GitLab runner environment. The scripts can be found in pipeline-templates.

CI

anchore-sbom-generation

anchore-sbom-generation

The anchore-sbom-generation job creates a Software Bill of Materials (SBOM), which is an artifact that provides a more complete picture of installed software. The SBOM will be used as part of the anchore-scan job.

Details

This job is solely the responsibility of Mission DevOps (MDO). If there is a failure, report it at Pipeline Issues.

anchore-scan (may contain customer-actionable findings)

anchore-scan

The anchore-scan job scans containers for Common Vulnerabilities and Exposures (CVEs) as defined by the National Vulnerability Database.

NOTE

This job only runs the scan; if it fails, report it at Pipeline Issues.

Findings (pass/fail) are documented in the anchore-twistlock-results job.

anchore-twistlock-results (may contain customer-actionable findings)

anchore-twistlock-results

The anchore-twistlock-results job shows the combined output of the anchore-scan and twistlock-scan jobs.

Findings will need to be remediated by the product team, or an Anchore/Twistlock ticket can be opened to request an exception with appropriate justification.

Exceptions for CVEs in base images are granted automatically.

Details

Note

Images must use the latest version of the base image or inherited findings will not be excluded automatically. Versions can be checked in Iron Bank Harbor.

build-image

build-image

The build-image job builds the Docker image that will be deployed. It reads the Dockerfile located at the root of the source code repository.

clamav-scan (may contain customer-actionable findings)

clamav-scan

The clamav-scan job scans containers for malware and viruses using clamscan.

Any files flagged as malware will need to be removed from the image.

Details

P1 uses a Python script in pipeline-templates to run the clamscan command provided by ClamAV. These are the general steps of the script P1 uses to perform ClamAV scanning:

- Image layers are downloaded as tar archives using skopeo.

- ClamAV Virus Database (CVD) files are fetched from a package repo in the pipeline-templates project.

- Downloaded image layer archives are scanned using clamscan.

- ClamAV output is written to a report called clamav.txt that is saved as a job artifact.

- Output and status code are used to determine if there are findings, un-scanned files, or if the scan passed cleanly.
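The last step above can be illustrated with a short sketch. This is not the P1 script itself; it only assumes the exit codes documented in the clamscan man page (0 = no virus found, 1 = virus(es) found, 2 = error) and the usual `<path>: <signature> FOUND` output lines. The names `ScanOutcome` and `classify_scan` are hypothetical.

```python
from dataclasses import dataclass

# clamscan exit codes as documented in its man page:
# 0 = no virus found, 1 = virus(es) found, 2 = some error(s) occurred.
CLEAN, FOUND, ERROR = 0, 1, 2


@dataclass
class ScanOutcome:
    status: str          # "passed", "findings", or "error"
    infected: list[str]  # paths reported with a FOUND signature


def classify_scan(returncode: int, output: str) -> ScanOutcome:
    """Turn a clamscan exit code and its text output into a simple outcome."""
    # clamscan reports infected files as "<path>: <signature> FOUND".
    infected = [
        line.rsplit(": ", 1)[0]
        for line in output.splitlines()
        if line.rstrip().endswith(" FOUND")
    ]
    if returncode == FOUND or infected:
        return ScanOutcome("findings", infected)
    if returncode == CLEAN:
        return ScanOutcome("passed", [])
    # Anything else (including exit code 2) is treated as a scan error.
    return ScanOutcome("error", [])
```

A caller would pass the return code and captured output of the clamscan process; how the actual pipeline script treats un-scanned files is not covered by this sketch.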

dependency-check (may contain customer-actionable findings)

dependency-check

The dependency-check job scans the dependencies for security vulnerabilities.

Who Does this?

MDO implements, but product teams fix identified security vulnerabilities.

Details

- Uses the dependencies artifact from the build job.

- Scans all dependencies against a CVE database.

- Results can be found in the SonarQube Web GUI under a separate "dependencies" project.

Common Errors

Any findings that are false positives can be reported through a Pipeline Exception Request. For each finding, justification will need to be provided as a comment. The assigned Cyber engineer will review and grant the exception if the justification is valid. A single request can be made for multiple findings.

dockerfile-lint

dockerfile-lint

The dockerfile-lint job checks a Dockerfile for style, format, and security issues. It will check only the Dockerfile at the root directory of the repository. This Dockerfile will be used to generate the Docker image in the build-image stage.

Details

P1 uses a Hadolint Iron Bank image, combined with the appropriate Impact Level's (IL's) container registry. Registries include the following:

NOTE

Issues labeled as Info severity should be addressed but will not block the pipeline; Warnings and Errors will block the pipeline.

Common Errors

Errors that occur in this job can be looked up on the Hadolint GitHub page, which provides links to examples of bad and fixed code.

Last user of a Dockerfile should be non-root.

Every stage of your Dockerfile should end with a non-root user. This includes intermediate stages in a multi-stage build. USER root can be used if you switch to another user before exiting the stage.

Example:

FROM image1

USER root

COPY . .

USER node # <--- add this

FROM image2

CMD [ "npm", "start" ]

fetch-latest-release

fetch-latest-release

The fetch-latest-release job is an automated task that retrieves the most recent version of a project by referencing the latest Git tag. It ensures consistent, traceable release creation whenever a new tag is pushed.

fetch-release-artifacts

fetch-release-artifacts

The fetch-release-artifacts job retrieves an archive of files and directories related to the job.

find-unauthorized

find-unauthorized

The find-unauthorized job will check a Dockerfile for any unauthorized commands and unauthorized registries. It will check the Dockerfile at the root directory of the repository. This Dockerfile will be used to generate the Docker image in the build-image stage.

Details

Only Authorized Registries

The only authorized registries for pulling images are Iron Bank and the MDO-managed pipeline-template registries (https://registry.il2.dso.mil, https://registry.il4.dso.mil, https://registry.il5.dso.mil). Dockerfiles pulling from other registries will fail.
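As a rough local pre-check of the registry rule, the sketch below collects the image named on each FROM line and flags any whose host is not on an allow list. It is an illustration only, not the pipeline's implementation. The function name `unauthorized_images` is hypothetical, and `registry1.dso.mil` is assumed to be the Iron Bank host because it is the registry used in this document's own Docker command.

```python
import re

# Hosts taken from the text above; registry1.dso.mil is an assumption
# (it is the registry used in this document's docker run command).
AUTHORIZED_HOSTS = {
    "registry1.dso.mil",
    "registry.il2.dso.mil",
    "registry.il4.dso.mil",
    "registry.il5.dso.mil",
}

FROM_RE = re.compile(
    r"^\s*FROM\s+(?:--platform=\S+\s+)?(?P<image>\S+)(?:\s+AS\s+(?P<alias>\S+))?",
    re.IGNORECASE,
)


def unauthorized_images(dockerfile_text: str) -> list[str]:
    """Return FROM images that do not come from an authorized registry host."""
    aliases: set[str] = set()
    bad: list[str] = []
    for line in dockerfile_text.splitlines():
        match = FROM_RE.match(line)
        if not match:
            continue
        image = match.group("image")
        # "FROM builder" may refer to an earlier stage, not a registry image.
        if image.lower() not in aliases:
            host = image.split("/", 1)[0]
            if host not in AUTHORIZED_HOSTS:
                bad.append(image)
        if match.group("alias"):
            aliases.add(match.group("alias").lower())
    return bad
```

Images supplied through build arguments (for example `FROM ${BASE_IMAGE}`) are not resolved here and would be reported as unauthorized.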

Unauthorized Commands

Commands that require external web access are not allowed in the Dockerfile. This includes commands that install dependencies. Any dependencies need to be brought in during the pipeline's build job – not build-image. The dependencies will be cached and saved as artifacts to be used throughout your pipeline. They will be available in the GitLab runner environment. They must be scanned for vulnerabilities before they are allowed to be deployed to P1 resources.

To bring dependencies into your Docker image, use the COPY directive to copy files from the GitLab runner environment into the container.

OS-level packages (e.g., those installed with yum or dnf, or tools such as wget) cannot be brought in as artifacts; they must be installed on the base image. Product teams should try to find pre-approved Iron Bank images with these packages already installed. New Iron Bank images can be requested here.

We also do not allow commands that modify users.

The list of unauthorized commands:

- adduser

- apk

- apt

- apt-get

- bundle add

- bundle install

- bundle update

- curl

- dnf

- dotnet publish

- g++

- gcc

- gem install

- get

- go build

- go get

- gradlew build

- gradlew assemble

- hadolint ignore

- install

- make

- mvn install

- mvn package

- npm ci

- npm i

- npm install

- pip install

- pipenv

- rpm

- useradd

- wget

- yarn add

- yarn install

- yum
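To get a feel for how a Dockerfile might be checked against this list before committing, here is a minimal sketch. It is not the cmdscan.py script from pipeline-templates and may match differently than the real job does. The function name `find_unauthorized` is hypothetical, and the list is a subset of the commands above.

```python
import re

# A subset of the unauthorized commands listed above, for illustration only.
UNAUTHORIZED = [
    "apt-get", "curl", "dnf", "npm install",
    "pip install", "useradd", "wget", "yum",
]


def find_unauthorized(dockerfile_text, commands=UNAUTHORIZED):
    """Return (line_number, command) pairs for each listed command found."""
    hits = []
    for number, line in enumerate(dockerfile_text.splitlines(), start=1):
        for command in commands:
            # Allow any whitespace between the words of multi-word commands.
            body = r"\s+".join(re.escape(part) for part in command.split())
            # Match whole tokens only, so "yum" does not match "yummy".
            pattern = r"(?<![\w-])" + body + r"(?![\w-])"
            if re.search(pattern, line):
                hits.append((number, command))
    return hits
```

An empty result from this sketch does not guarantee that the find-unauthorized job will pass; the job itself remains the authority.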

Running Locally

Prerequisites:

- Pipeline-templates access. The Reporter role is sufficient.

- registry1 access

- Docker

Steps:

- Clone pipeline-templates.

- Run this Docker command:

docker run -it --rm -w /app -v <path_to_repo>:/app -v <path_to_dir_with_pipeline-templates>/pipeline-templates/scripts:/scripts registry1.dso.mil/ironbank/opensource/python/python39:v3.9.6 bash

- Once in the Docker container, run these commands:

cp /scripts/Dockerfile-scan/cmdscan/* /app

cd /app

python cmdscan.py Dockerfile unauthorized.json

Common Errors

The Dockerfile cannot be found

The Dockerfile must be at the root directory of the source code repository. It must be named Dockerfile (no affixes).

gitlab-advanced-sast (may contain customer-actionable findings)

gitlab-advanced-sast

The gitlab-advanced-sast job executes GitLab’s Static Application Security Testing (SAST) scanner. GitLab SAST automatically scans source code for vulnerabilities during development. It is integrated into the CI/CD pipeline and runs with each commit to provide immediate feedback.

NOTE: This job only runs the scan; if it fails, report it at Pipeline Issues.

Please read Sonarqube and GitLab SAST for more details.

lint

Lint

The lint job runs a linter specific to your project's language, e.g., pylint, eslint, golint. Alternate linters can be requested barring any complications in implementing them. Configuration of the linter is managed through a config file. Product teams are free to configure the rules and whitelists as desired.

Failed Job

This job is provided as a convenience for product teams. It is not required for a Certificate to Field (CtF) and a failed status will not block the pipeline.

Details

For further details, please refer to our HowTo - Requirements - Linting document.

build

build (mvn-package, pip-install, yarn-install, gradlew-assemble, composer-install-prod, composer-install-dev, go-build, npm-ci, bundle-install, dotnet-publish, build-cpp)

These jobs install code dependencies through language-specific package managers (e.g., pip, npm, and bundle). Below is a list of languages supported by P1 and their corresponding job:

- CPP: build-cpp

- Dotnet: dotnet-publish

- Go: go-build

- Gradle: gradlew-assemble

- Maven: mvn-package

- NPM: npm-ci

- PHP: composer-install-prod

- Python: pip-install

- Yarn: yarn-install

TIP

Dependencies installed during the build job should be copied into your Docker image through the Dockerfile. They should not be reinstalled during the Docker build.

Pipelines are configured to automatically install dependencies based on a package dependency file (e.g., package.json, requirements.txt, and go.mod). Pipelines are designed to support one package manager. If dependencies from multiple package managers are needed, the repository needs to be broken up; package pipelines can be built to package those dependencies and upload them to the project's package registry.

Details

Please review this document for more in-depth information about build job dependencies: KB - GitLab - Build Job

Common Errors

Old dependencies or dependency versions found in project after updating the package dependency file.

The runner cache may need to be cleared. On the pipelines page, click the [ Clear runner caches ] button.

p1-custom-sast (may contain customer-actionable findings)

p1-custom-sast

The p1-custom-sast job is used to perform custom security scans on software build artifacts that may not be included in the respective image build. Findings (if any) will appear in the project vulnerabilities as Critical Severity findings. Example checks include scanning a dependency's code for indicators of supply chain compromise.

sbom-diff

The sbom-diff job compares the current SBOM with the previous version to help identify any changes or updates made to the software components.

semgrep-sast

semgrep-sast

The semgrep-sast job executes GitLab's SAST scanner.

NOTE: This job only runs the scan; if it fails, report it at Pipeline Issues.

Please see Sonarqube and GitLab SAST for more details.

sonarqube (may contain customer-actionable findings)

sonarqube

SonarQube is a static code analyzer (i.e., SAST) that checks for security vulnerabilities and general code quality issues. It will also process the code coverage report produced by the unit-tests job.

Details

Please review this document for more information: How To - Requirements - Sonarqube/GitLab SAST.

Common Errors

Code coverage is checked in this job, but the code coverage report is generated during the unit-tests job. Verify that the report was generated and saved as an artifact.

Any findings that are false positives can be reported through a Pipeline Exception Request. For each finding, justification will need to be provided as a comment. The assigned MDO engineer will review and grant the exception if the justification is valid. A single request can be made for multiple findings.

sonarqube-metrics

sonarqube-metrics

The sonarqube-metrics job analyzes the code quality of the project by checking the codebase for various SonarQube metrics.

trufflehog

trufflehog

The trufflehog job runs the TruffleHog scanning tool to identify potentially sensitive data in your repository (e.g., passwords and certificates). Product teams should use passwords for their production environment and should never commit them in plaintext to their repo.

Who Does this?

MDO adds the exceptions for false positives in the product YAML.

Details

There is currently no user interface for TruffleHog. Findings will be reported in the pipeline job output as well as being saved off as an artifact.

Common Errors

Refer to the troubleshooting page.

twistlock-base-image-scan

The twistlock-base-image-scan job is used to scan the base image so findings can be used as a whitelist for CVEs discovered by Twistlock in the anchore-twistlock-results job.

twistlock-scan

twistlock-scan

The twistlock-scan job scans the Docker image using Twistlock. It detects CVEs as defined by the National Vulnerability Database, as well as other problems like private keys in the container.

NOTE

This job only runs the scan; if it fails, report it at Pipeline Issues.

Details

Note

Product teams cannot view the Twistlock console. However, they can view Twistlock results in the anchore-twistlock-results job.

Common Errors

See common errors and solutions at: TS - Twistlock - Stage Failure.

unit-tests

unit-tests

The unit-tests job runs small, automated tests focusing on individual components of code. These jobs ensure every code change is validated, resulting in improved code quality.

Details

- P1 requires 80% coverage across all code written by the team.

- A coverage file must be produced by the unit test framework (to be uploaded to SonarQube).

- For npm projects, this job is invoked by running npm run test:unit so you will have to define a test:unit script in package.json.

Common Errors

If the unit-tests job fails for any reason, the code coverage report will not be produced, and code coverage will be considered 0%.

Unit tests should not require external services (e.g., databases, authentication, and S3 buckets). For reference, refer to the following:

- https://blog.boot.dev/clean-code/writing-good-unit-tests-dont-mock-database-connections

- https://stackoverflow.com/questions/1054989/why-not-hit-the-database-inside-unit-tests

Files not written by the product team can be given exclusions.

Replicating Unit Tests Locally

Product teams may debug unit test errors in pipelines by running them inside the same image that the pipeline uses. To determine the image used by the pipeline, look at the top of the job for the following line:

Using Kubernetes executor with image {image name}

verify-base-image

verify-base-image

The verify-base-image pipeline job is responsible for ensuring that the base image used in a CI/CD pipeline is up to date with the latest fixes and improvements.

Staging

boe-release

boe-release

The boe-release job allows customers to create a body of evidence (BoE) to gather all scan artifacts in an archive without needing to perform a full release to production (which creates a GitLab release and BoE archive).

deploy-staging

deploy-staging

Details

The deploy-staging job deploys your application to the P1 staging environment.

Who Does this?

Product team debugs errors in their staging environment as reported by the ArgoCD status script.

Common Errors

<APP> FAILED to deploy in ArgoCD

Visit ArgoCD and confirm the cluster is healthy. The deploy-staging job verifies the health of the entire namespace, i.e., a failed pod for another service will cause the job to report a failure. If assistance is required, create a ticket.

e2e-tests

e2e-tests

The e2e-tests job executes E2E tests.

Who Does this?

Product team creates and fixes E2E errors. NOTE: If your staging code is stored in one IL and deploys to another, you should refer to the "Cross-IL Setups" note, provided here: How To - Requirements - E2E Testing.


Further Details

This job is designed to run Cypress tests by invoking npm run test:e2e-ci.

P1 does not currently have any requirements for what your app needs to test. See this page for more information: How To - Requirements - E2E Testing.

Common Errors

Errors in this job are typically due to E2E test failures. If you cannot determine the cause of the failure, please create a ticket.

generate-summary-report

generate-summary-report

The generate-summary-report job generates a summary report based on the various SCA scan reports produced within the pipeline.

namespace-health-check

namespace-health-check

The namespace-health-check job validates that the deployment completed successfully, and the application is ready for E2E testing. After the image is deployed to staging, P1 will verify the deployment status using ArgoCD to confirm the staging deployment is fully rolled out, the application is healthy, and all components function correctly.

Details

If this job fails, troubleshooting begins in ArgoCD, as it provides the most comprehensive logs and details for deployment failures.

Common Errors

- Misinterpretation of ArgoCD outputs or health status indicators. Refer to the Argo CD Resource Health document to review ArgoCD outputs and health status indicators.

- Unclear remediation path after a failed deployment.

pen-test (may contain customer-actionable findings)

pen-test

The pen-test job is handled by OWASP ZAP. Penetration test reports are uploaded to SonarQube under the zap project. The zap project will be named after the staging URL (e.g., if the staging URL is https://hello-world.staging.dso.mil, the zap project name is hello-world-staging-dso-mil-zap).
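The naming rule can be sketched as a small helper. The rule used here (take the host name, replace dots with hyphens, append `-zap`) is inferred from the single example above and is an assumption, and `zap_project_name` is a hypothetical name.

```python
from urllib.parse import urlparse


def zap_project_name(staging_url: str) -> str:
    """Derive the zap project name from a staging URL (rule inferred from one example)."""
    host = urlparse(staging_url).hostname
    if host is None:
        raise ValueError(f"not an absolute URL: {staging_url!r}")
    # Dots in the host name become hyphens, followed by the "-zap" suffix.
    return host.replace(".", "-") + "-zap"


print(zap_project_name("https://hello-world.staging.dso.mil"))
```

This can help when searching SonarQube for the report, but the project list in SonarQube is the authoritative source for the actual name.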

Who Does this?

The product team fixes ZAP findings.

Requirements

Requirements for this job have been documented here: How To - Requirements - ZAP Pen Test Job.

upload-staging-sbom

upload-staging-sbom

The upload-staging-sbom job is responsible for uploading the SBOM to Defect Dojo to be ingested by that platform.

Publish

publish-package

publish-package

Some pipelines are set up to publish a code artifact to GitLab instead of deploying a container to Kubernetes. P1 refers to them as "package pipelines." They will generate a package that will be uploaded to the GitLab package registry.

Who Does this?

MDO will create a package pipeline upon request. Versioning is generally taken from the package file used for the repository's technology, such as a package.json or pom.xml file.

Further Details

This job runs only on the default branch.

Common Errors

Packages must have a unique name and version. Duplicate packages will be rejected.

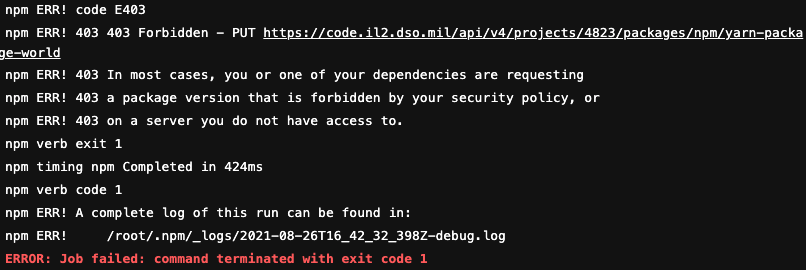

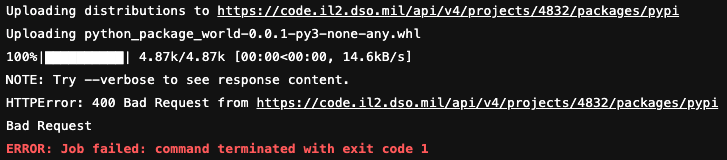

For npm/yarn packages, the error will look similar to:

For python packages, the error will look similar to:

The package version must be incremented to a new and unique version. Packages are uploaded to the GitLab package registry (on the left of the repository in GitLab, click Packages & Registries → Packages).

For versioning, it is recommended to use the SemVer spec.

Prod

release-major, release-minor, release-patch

release-major, release-minor, release-patch

After a default branch passes all jobs, the release jobs can be run to cut a new release of the project.

Who Does this?

The product team runs this stage manually when they want to cut a release and/or promote code to production.

Details

To run the release stage, click the cog and select the type of release you want:

release-major will increment the major version: 1.2.3 → 2.0.0

release-minor will increment the minor version: 1.2.3 → 1.3.0

release-patch will increment the patch version: 1.2.3 → 1.2.4
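The three version bumps above can be written as a small function. This is only an illustration of the arithmetic, not the pipeline's release code. The function name `bump` is hypothetical, and it assumes a plain MAJOR.MINOR.PATCH version with no pre-release or build suffix.

```python
def bump(version: str, release: str) -> str:
    """Return the next version for a release-major, release-minor, or release-patch job."""
    major, minor, patch = (int(part) for part in version.split("."))
    if release == "release-major":
        # A major bump resets minor and patch to zero.
        return f"{major + 1}.0.0"
    if release == "release-minor":
        # A minor bump resets patch to zero.
        return f"{major}.{minor + 1}.0"
    if release == "release-patch":
        return f"{major}.{minor}.{patch + 1}"
    raise ValueError(f"unknown release type: {release!r}")


for job in ("release-major", "release-minor", "release-patch"):
    print(job, bump("1.2.3", job))
```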

When the release stage succeeds, ArgoCD will automatically deploy the new release to production.

Common Errors

No release for 1.X.X found.

The pipeline has not been configured to allow production deployments yet. A CtF is required for the app. If the app is ready for CtF, the process can be started here.

If a CtF has been approved and a CtF letter signed, MDO must authorize the pipeline. A request can be started here.

If the MDO request has been completed, but the error is still present, verify that a new pipeline was run after the merge request for pipeline-templates was merged. Verify that the release being cut is authorized (CtFs are valid for specific major versions).

Post

release-major-trigger, release-minor-trigger, release-patch-trigger

release-major-trigger, release-minor-trigger, release-patch-trigger

These jobs are responsible for triggering downstream pipeline jobs that handle automated release processes. These automate and standardize the release workflow to ensure version consistency, traceability, and compliance across environments. These jobs perform the following key actions:

- Execute a GitLab release of the specified type by bumping the version tag.

- Push the container image under the newly released version.

- Deploy the image to production.

- Generate a corresponding BoE and related release artifacts.

Classified Production Deployments

Engage the ODIN team to set up your IL6/SIPR/AFSCI deployments. Once engaged, the Classified Ops Team will add team members to the ODIN Classified Delivery Mattermost channel.

Risk-Based Deployments

What is Risk-Based Deployments?

Risk-Based Deployments (RBD) is a feature of Party Bus Image Build pipelines that allows customers to incrementally improve the security of their images. If the image a customer wants to deploy presents less risk than the currently deployed image, the pipeline permits the deployment to proceed.

The anchore-twistlock-results job

The main job at the core of RBD functionality is the anchore-twistlock-results job. This job acts as our vulnerability gate-check mechanism for Image Build pipelines and decides whether to allow a customer to deploy the image they built in each pipeline to run in the production environment.

This determination is based on a set of logic that evaluates how much risk is added or reduced by the vulnerabilities present in the image compared to what currently exists in production. This is done by evaluating vulnerability scan results from the anchore-scan, twistlock-scan, twistlock-base-image-scan, and fetch-release-artifacts jobs.

Information is also collected from the fetch-latest-release and verify-base-image jobs.

Additional jobs used and their purpose

The following are additional jobs used to make RBD work, and the information gathered from them is used for the anchore-twistlock-results job.

Required Jobs

anchore-twistlock-results directly depends on information from:

- anchore-scan: Scans the image with Anchore and downloads the vulnerability reports.

- twistlock-scan: Scans the image with Twistlock and downloads the vulnerability reports.

- fetch-latest-release: Calculates the latest release version for the image.

Optional Jobs

Information from the following jobs is optional, but if their artifacts are not present, the evaluation will be stricter (and the job is likely to fail):

- verify-base-image: Used to get the Base Image Uniform Resource Identifier (URI) for the image.

- fetch-release-artifacts: Downloads Anchore/Twistlock vulnerability reports for the most recent release for cross-reference with the current image.

- twistlock-base-image-scan: Scans the base image with Twistlock and downloads the vulnerability reports to use as a whitelist for vulnerabilities discovered by Twistlock in the customer image.

DNS Requests

The MDO Team opens a DNS Request Ticket with Cloud Native Access Point (CNAP).